More than half of organizations struggle to keep data accurate and consistent. Less than 40% of leaders report high confidence in their own numbers. Yet the pressure to deliver AI-driven insights – in real time – has never been higher.

That was the starting point for Inetum’s webinar SAP Business Data Cloud & Databricks: Unlock AI & Data potential, which brought together practitioners from Inetum and special guests from SAP and Rolls-Royce. The session featured Jan Tretina (Ecosystem Development Manager, SAP), Sebastian Stefanowski (Databricks Practice Leader, Inetum), Raul Muñoz-Gutierrez (SAP Analytics Business Director, Inetum), and Andrew Lager (Program Manager and Digital Delivery Manager, Civil Digital and IT, Rolls-Royce), hosted by Oleh Hudym (SAP Growth Manager, Inetum).

- 1. Less than 40% of leaders trust their own data – and that gap stalls AI

- 2. What SAP Business Data Cloud is – and how it closes the trust gap

- 3. Migration: BDC works with what organizations already have

- 4. Data products eliminate the 80% of a project that adds no value

- 5. SAP Databricks and standalone Databricks: the same engine, different purpose

- 6. Six years with Databricks at Rolls-Royce: 50% cost reduction and AI for every analyst

- 7. What AI on SAP data actually looks like

- 8. Flexible consumption: licensing that moves with the organization

- 9. What is coming in SAP Business Data Cloud in 2026

- 10. How CFOs should measure ROI on SAP Business Data Cloud

- 11. How to start – without committing to a licence first

Less than 40% of leaders trust their own data – and that gap stalls AI

“Less than 40% of leaders report high confidence in their data,” Jan Tretina said at the opening of the session. “That gap directly impacts both decision speed and innovation.”

Three issues sit behind this figure. First, data quality: over half of organizations struggle to keep data accurate and consistent across systems. Second, misalignment between IT and business: finance teams need insights quickly, but IT landscapes can’t always deliver at that pace. Third, fragmentation: with data spread across multiple systems, bringing it together in real time remains a major pain point.

The business consequence is concrete. Data-oriented organizations – those that have solved the trust problem – are, according to SAP’s analysis, four times more likely to succeed.

What SAP Business Data Cloud is – and how it closes the trust gap

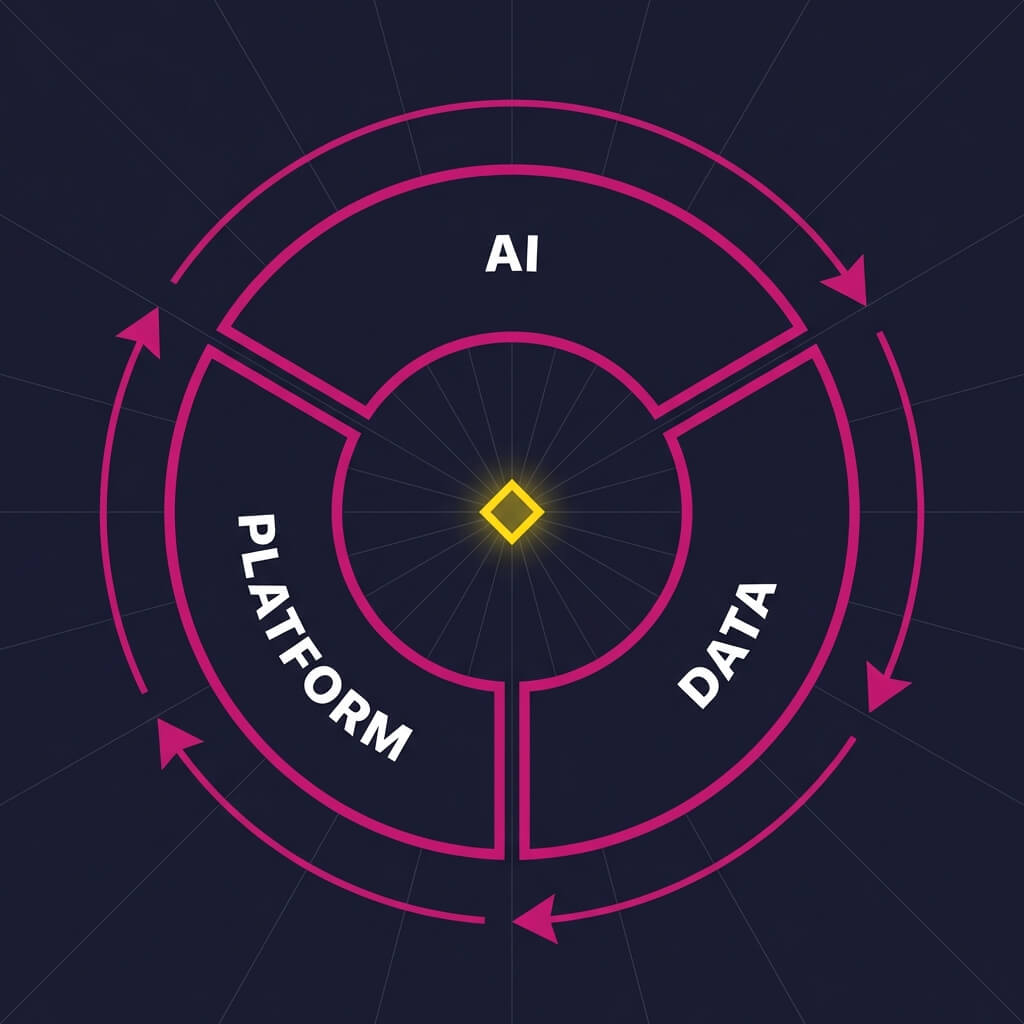

Jan Tretina described SAP Business Data Cloud through a flywheel: AI is only as strong as the data behind it, data is only valuable when it is trusted and accessible, and both require a resilient platform underneath.

“SAP Business Data Cloud is our unified data foundation,” he said. “It brings clean, connected, and trusted business data together – unifying data across SAP and non-SAP systems and making that data immediately usable for AI, analytics, and planning.”

BDC consolidates SAP data services – SAP BW, SAP Datasphere, SAP Analytics Cloud, and extension partners including Databricks and Snowflake – under a single platform. The operational impact Tretina highlighted: instead of maintaining thousands of pipelines, custom integrations, and shadow data, BDC provides one consistent data foundation across all use cases. It embraces non-SAP data, open standards, and a broad partner ecosystem, letting organizations build multi-vendor landscapes without losing control of their data. This architecture makes BDC relevant across sectors, from retail and manufacturing to financial services and banking.

Migration: BDC works with what organizations already have

A recurring concern when evaluating any new platform is the cost of transition – years of investment in SAP BW, Datasphere, or Analytics Cloud environments that can’t simply be discarded.

Raul Muñoz-Gutierrez addressed this directly. BDC includes all SAP data services within a single platform and is designed to absorb existing environments, not replace them. For SAP BW, BDC offers a “lift and shift” migration path – the existing environment moves in with data and connections preserved.

For SAP Datasphere or SAP Analytics Cloud, a “rewiring” process brings those solutions under the BDC umbrella without rebuilding. “All these tasks don’t require any work from the customers,” Muñoz-Gutierrez confirmed. “They can be carried out directly by SAP.”

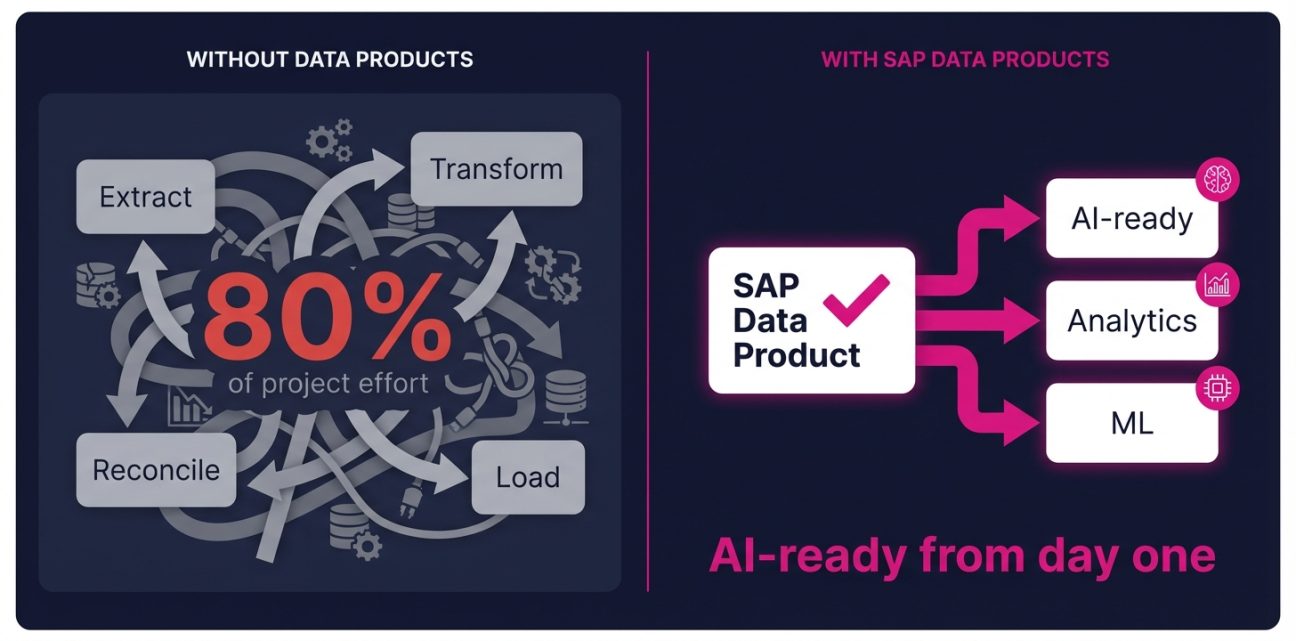

Data products eliminate the 80% of a project that adds no value

“Do you know the effort and time required in a data project to perform data extraction, loading and transformation, as well as reconciling all the information?” Raul Muñoz-Gutierrez asked during the session. “All that work, that normally is the 80% of the project, is partially eliminated through a SAP data product.”

SAP data products contain the main data from key functional areas – financial, HR, supply chain – and they inherit all the data semantics from SAP. This makes them usable not just for reporting, but for AI and machine learning development from day one.

For CFOs specifically, the Financial Intelligence Package activates intelligent applications on SAP Analytics Cloud while simultaneously triggering data loading from SAP S/4HANA into BDC data products. “Regularly every 30 minutes, the data is going to travel from our SAP S/4 system to our BDC,” Muñoz-Gutierrez explained. Treasury management, financial planning, and forecasting become available and AI-ready from the moment of activation – with the option to build custom AI and machine learning on top.

SAP Databricks and standalone Databricks: the same engine, different purpose

If Databricks is already in the organization’s stack – or under evaluation – a natural question arises: how does SAP Databricks within BDC relate to the standard Databricks platform?

Sebastian Stefanowski, whose team works with both, explained the distinction. SAP Databricks is a modified release of the Databricks platform, tightly integrated with BDC – authentication, authorization, and billing are all managed through SAP, using SAP compute units. The most important aspect of the integration, in Stefanowski’s words, is a dedicated connector enabling zero-copy data sharing between SAP data products and the Databricks catalog: SAP financial data becomes directly queryable in Databricks without replication or transformation overhead.

“If SAP data is at the heart of your business, and financial data is really central to what you do, then SAP Databricks is definitely worth serious consideration,” Stefanowski said. “It would enable most of the useful features of Databricks with, generally speaking, no technological barrier.”

The trade-off is feature breadth. Organizations with IoT streaming requirements, multi-stage declarative pipeline orchestration (DLT), or a need to manage their own compute clusters will find standalone Databricks richer – with dedicated connectors for external cloud services and platforms like Salesforce. In SAP Databricks, those broader integrations run through SAP. Stefanowski’s summary was clear: for general data integration scenarios, standalone Databricks; for organizations where SAP financial data is the core, SAP Databricks removes the barriers.

“What seemed to be impossible a few years back in terms of AI capabilities is now possible with this amazing partnership between SAP and Databricks,” Oleh Hudym noted during the session.

Six years with Databricks at Rolls-Royce: 50% cost reduction and AI for every analyst

Andrew Lager has been on the Databricks journey at Rolls-Royce for over six years, including as an early adopter of many of its latest technologies – and he came to the webinar with numbers.

“We’ve reduced costs upwards of 50% compared to our previous solution,” he said, referring to the migration from a legacy warehouse to Databricks Lakehouse for engine health monitoring data. Three factors drove that reduction: cheaper compute via Spark, schema evolution that prevents jobs from breaking when table structures change during migrations, and Unity Catalog – which centralizes data access across sources so Lager can produce management information for internal stakeholders without involving additional teams.
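To make the schema evolution point concrete, here is a minimal plain-Python sketch of the behavior: an append that widens the table when new columns show up, instead of failing. It is an illustration only, not Delta Lake's implementation and not Rolls-Royce's code. In Delta Lake this behavior is typically switched on with the `mergeSchema` write option. The function name, column names, and values below are all made up.

```python
from typing import Any


def append_with_schema_evolution(
    columns: list[str],
    rows: list[dict[str, Any]],
    new_rows: list[dict[str, Any]],
) -> tuple[list[str], list[dict[str, Any]]]:
    """Append new_rows, widening the schema instead of failing on unknown columns."""
    evolved = list(columns)
    for row in new_rows:
        for name in row:
            if name not in evolved:
                evolved.append(name)  # a previously unseen column is added at the end
    # Every row is padded with None for the columns it does not carry.
    merged = [{c: r.get(c) for c in evolved} for r in rows + new_rows]
    return evolved, merged


# Hypothetical engine health records; the second batch introduces a new column.
columns = ["engine_id", "temperature"]
rows = [{"engine_id": "E1", "temperature": 612.0}]
incoming = [{"engine_id": "E2", "temperature": 598.5, "vibration": 0.02}]

columns, rows = append_with_schema_evolution(columns, rows, incoming)
print(columns)
print(rows)
```

The older row keeps working after the change: it simply reads as `None` in the new `vibration` column, which is why downstream jobs do not break when a source table gains a field.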

On the AI side, his example was equally direct. “Those activities that would take me days can take me five minutes now,” he said, describing Databricks AI/BI Genie – a feature that lets non-technical users query datasets in natural language instead of SQL.

“The biggest benefit, not just for me but for Rolls-Royce in general, has been opening up technical solutions to less technical people.”

What AI on SAP data actually looks like

Sebastian Stefanowski described Databricks as a complete environment for AI and machine learning, where SAP BDC data products are exposed as tables that plug directly into data pipelines. He grouped the platform’s AI and ML capabilities into several practical areas:

- AI Playground – run any off-the-shelf or externally sourced model on a serverless runtime; create custom prompts and test hypotheses before committing to a build

- Model serving endpoints – host and serve custom models at scale

- AI agent framework – build agentic solutions quickly, with a built-in vector search database for RAG implementations

- MLflow – manage and monitor the full training lifecycle; compare experiments and select the best-performing model

- AutoML – Databricks runs parallel experiments across multiple model architectures on your data automatically, then selects the model that best fits your test results

- AI functions in SQL – call AI models directly from a SQL SELECT statement and store predictions as part of the query output

- AI Gateway – govern model usage centrally: block unauthorized access, filter sensitive queries, and maintain full oversight of what models run and on what data
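As a rough illustration of the "AI functions in SQL" item above, the sketch below builds a query that scores customer feedback with `ai_analyze_sentiment`, one of the built-in Databricks SQL AI functions. The table name, column name, and helper function are assumptions made for this example and were not shown in the webinar. The snippet only assembles and prints the SQL text, because running it requires a Databricks workspace where AI functions are enabled.

```python
# Hypothetical table and column names, chosen only for illustration.
FEEDBACK_TABLE = "sales.customer_feedback"
TEXT_COLUMN = "comment_text"


def build_sentiment_query(table: str, text_column: str, limit: int = 100) -> str:
    """Return a SELECT that stores the model's sentiment label next to each row."""
    return (
        f"SELECT {text_column},\n"
        f"       ai_analyze_sentiment({text_column}) AS sentiment\n"
        f"FROM {table}\n"
        f"LIMIT {limit}"
    )


query = build_sentiment_query(FEEDBACK_TABLE, TEXT_COLUMN)
print(query)
```

In a Databricks notebook, a string like this could be passed to `spark.sql(query)`, or the SQL could be pasted into the SQL editor directly. The prediction arrives as an ordinary column, so it can be filtered, joined, or written to a table like any other query output.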

He then connected those capabilities to the most common use cases for SAP data: cash flow forecasting, price forecasting, and stock level optimization – blending SAP financial and operational data with seasonality data, interest rate history, and interest rate predictions to anticipate future costs, prices, and inventory needs. Databricks AutoML fits naturally here, running statistical algorithms in parallel and surfacing the model that best fits historical test data.
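The selection step described here can be sketched in a few lines of plain Python: fit several candidate forecasters on the older part of a series, score each on a held-out tail, and keep the one with the lowest error. This is a simplified stand-in for the idea, not the Databricks AutoML API. The three forecasters, the error metric, and the quarterly cash flow figures are all assumptions chosen for illustration, and the candidates run sequentially here rather than in parallel.

```python
from statistics import mean
from typing import Callable

Forecaster = Callable[[list[float], int], list[float]]


def naive_last(history: list[float], horizon: int) -> list[float]:
    """Repeat the last observed value."""
    return [history[-1]] * horizon


def moving_average(history: list[float], horizon: int) -> list[float]:
    """Repeat the mean of the last three observations."""
    return [mean(history[-3:])] * horizon


def seasonal_naive(history: list[float], horizon: int) -> list[float]:
    """Repeat the value from the same quarter one year earlier."""
    season = 4
    return [history[-season + (i % season)] for i in range(horizon)]


def mae(actual: list[float], predicted: list[float]) -> float:
    """Mean absolute error between two equally long series."""
    return mean(abs(a - p) for a, p in zip(actual, predicted))


def select_best(
    series: list[float], holdout: int, candidates: dict[str, Forecaster]
) -> tuple[str, dict[str, float]]:
    """Score every candidate on the held-out tail and return the winner."""
    train, test = series[:-holdout], series[-holdout:]
    scores = {name: mae(test, f(train, holdout)) for name, f in candidates.items()}
    return min(scores, key=scores.get), scores


# Synthetic quarterly cash flow with a yearly pattern and a slight upward trend.
cash_flow = [100.0, 120.0, 90.0, 150.0, 104.0, 124.0, 94.0, 154.0,
             108.0, 128.0, 98.0, 158.0]
candidates = {
    "naive_last": naive_last,
    "moving_average": moving_average,
    "seasonal_naive": seasonal_naive,
}

best, scores = select_best(cash_flow, holdout=4, candidates=candidates)
print(best, scores)
```

On this synthetic series the seasonal forecaster wins, because the data repeats a quarterly pattern that the other two candidates ignore. A real setup would compare far richer models and would add the external signals mentioned above, such as interest rate history.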

Beyond forecasting, LLM integration opens a second category: automatically generating product descriptions from structured SAP product data; running sentiment analysis on customer feedback to understand the reasoning behind customer behavior; and identifying the most promising customers to approach based on that analysis.

“All these features can be really, really nicely integrated with SAP and blended with SAP data,” Stefanowski said. “I think that’s something which will bring SAP customers to another level.”

Flexible consumption: licensing that moves with the organization

Raul Muñoz-Gutierrez described the BDC commercial model as one of its differentiators. “SAP Business Data Cloud offers a unit subscription model that provides customers with flexible subscription pricing,” he said. “This allows them to subscribe to the services they currently need and easily modify them in the future.”

The example he gave: an organization starts with SAP BW integrated into BDC. Once that environment has been migrated to SAP Datasphere, the BW capacity is removed and reallocated – to SAP Databricks or SAP Snowflake, for instance. A separate data services licence layer complements the platform subscriptions, enabling activation of analytical applications with near real-time data, development of custom data products, and external data sharing via BDC Connect – the service that exposes SAP data to Databricks, Snowflake, and Microsoft Fabric through Delta Sharing.

What is coming in SAP Business Data Cloud in 2026

Jan Tretina outlined SAP’s roadmap across three areas.

First, deeper openness and connectivity: BDC Connect is expanding to Databricks, Snowflake, Google Cloud, Microsoft Fabric, and AWS. Bi-directional data sharing with SAP HANA Cloud is also in development – data flowing both ways, removing silos at the source rather than managing them downstream.

Second, data products: SAP is expanding coverage across more lines of business and releasing Data Product Studio, which will make modeling SAP and non-SAP data into governed, shareable data products “much easier than ever before” – turning data into “a true asset others can consume and trust,” in Tretina’s words.

Third, AI-native capabilities built directly into the data layer: a new AI Hub with new models, enhancements to the SAP HANA Cloud Knowledge Graph, and a fully agentic multi-modal database – including memory for agents. “This is the database AI was really looking for,” Tretina said.

“We are building the data foundation every enterprise will need to power next-generation AI. 2026 will be a breakthrough year for BDC.”

How CFOs should measure ROI on SAP Business Data Cloud

In the Q&A, Jan Tretina identified four areas where BDC generates measurable returns for finance leaders.

Efficiency and proactivity. Fewer manual reconciliations, reduced data preparation effort, faster close-to-forecast cycles.

Data trust. Higher data quality and fewer errors – directly addressing the confidence gap he described at the start of the session.

Agility. Faster time to insight and quicker scenario iterations.

Business impact. Improved forecast accuracy and tangible cost savings from AI-driven recommendations.

“CFOs should look at gains in proactivity, trust, and measurable financial outcomes,” Tretina said. “With SAP BDC, the ROI is visible both in operational efficiency and in the quality of decisions which can power the business.”

How to start – without committing to a licence first

For organizations that want to evaluate BDC before committing to a full rollout, Raul Muñoz-Gutierrez described a concrete entry point. Inetum has three years of experience with SAP Datasphere and related environments, and operates its own SAP Business Data Cloud environment – available to run proof-of-concept scenarios with customers in a live setting, testing whether a target architecture fits their real needs before any licence investment.

“You don’t have to invest in a licence right now,” Muñoz-Gutierrez said. “You have to know what you want, and we are the best company to accompany you to this final scenario – because we have SAP Data Specialists and also global data expertise from non-SAP solutions.”

On the Databricks side, Sebastian Stefanowski was unambiguous: “Databricks is for everyone.” The platform runs on a pay-per-use model – if workloads run once a day for an hour, the cost reflects that hour. If the platform is idle, the cost goes to zero. “The openness and scalability of this platform – which actually scales down to zero – that is what should encourage any company who wants to start cloud data processing,” he said.
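The pay-per-use arithmetic is easy to check with a back-of-the-envelope sketch. The hourly rate below is a made-up placeholder, not a Databricks price, and the function ignores details such as billing units, cloud infrastructure charges, and storage. It only shows how cost tracks usage and drops to zero when nothing runs.

```python
def monthly_compute_cost(
    hours_per_run: float,
    runs_per_day: float,
    rate_per_hour: float,
    days: int = 30,
) -> float:
    """Cost of compute that is billed only while workloads are running."""
    return hours_per_run * runs_per_day * days * rate_per_hour


# Hypothetical rate in currency units per compute hour (an assumption for this example).
HYPOTHETICAL_RATE = 5.0

daily_job = monthly_compute_cost(hours_per_run=1.0, runs_per_day=1, rate_per_hour=HYPOTHETICAL_RATE)
idle = monthly_compute_cost(hours_per_run=0.0, runs_per_day=0, rate_per_hour=HYPOTHETICAL_RATE)
always_on = monthly_compute_cost(hours_per_run=24.0, runs_per_day=1, rate_per_hour=HYPOTHETICAL_RATE)

print(daily_job, idle, always_on)
```

With these placeholder numbers, a one-hour daily job costs 1/24 of an always-on cluster, and an idle month costs nothing in compute.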

The message across the webinar was consistent: before AI delivers value at scale, organizations need a data foundation that is clean, connected, and trusted. SAP Business Data Cloud is built to be that foundation. Databricks extends what can be done on top of it. And when the two are combined with the right approach, the gap between fragmented enterprise data and working AI closes faster than most organizations expect.

The full session is available on demand.

SAP, Databricks, Rolls-Royce, and Inetum practitioners covered migration strategy, data product architecture, and AI implementation – including the unedited live Q&A. Watch the webinar on demand →

To run a proof of concept in Inetum’s own SAP Business Data Cloud environment – without upfront licence commitment – talk to our team →
